Optimization - DNA - Mathematical Sciences

DNA faculty working on optimization

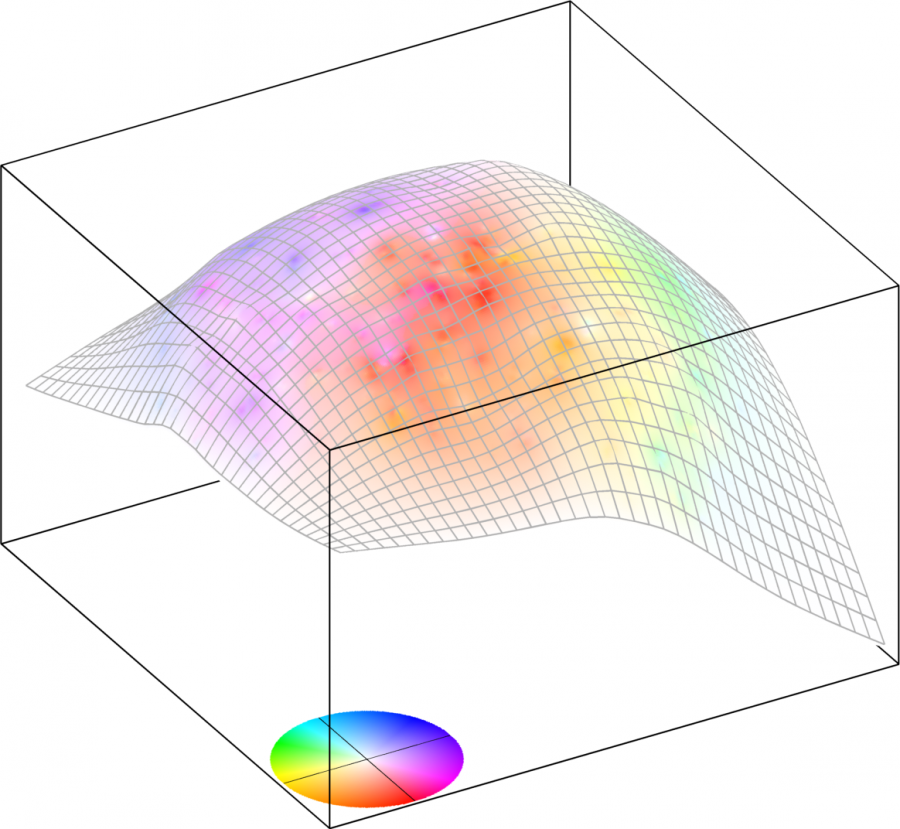

Ronny Bergmann is working on optimization on Riemannian manifolds. Many applications with nonlinear data can be phrased as optimization problems defined on a Riemannian manifold. The corresponding methods take the geometry into account and hence solve an unconstrained problem on the manifold. A main focus is developing fast and efficient algorithms, especially for high-dimensional manifolds and nonsmooth cost functions. The developed algorithms and the manifolds considered are implemented in Manifolds.jl and Manopt.jl.
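To illustrate how a constrained problem becomes an unconstrained one on a manifold, here is a minimal sketch of Riemannian gradient descent on the unit sphere. It is written in plain Python and does not use or reproduce the API of Manifolds.jl or Manopt.jl. The cost function (a Rayleigh quotient), the matrix `A`, the step size, and all function names are assumptions chosen for illustration.

```python
import math

def matvec(A, x):
    return [sum(a * xj for a, xj in zip(row, x)) for row in A]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def normalize(x):
    n = math.sqrt(dot(x, x))
    return [xi / n for xi in x]

def riemannian_gradient_descent(A, x0, step=0.1, iters=500):
    """Minimize f(x) = x^T A x over the unit sphere (A symmetric)."""
    x = normalize(x0)
    for _ in range(iters):
        # Euclidean gradient of f at x.
        egrad = [2.0 * g for g in matvec(A, x)]
        # Riemannian gradient: project onto the tangent space at x
        # by removing the component along x.
        c = dot(egrad, x)
        rgrad = [g - c * xi for g, xi in zip(egrad, x)]
        # Retraction: step along the negative gradient, then map
        # back onto the sphere by renormalizing.
        x = normalize([xi - step * g for xi, g in zip(x, rgrad)])
    return x

# Illustrative symmetric matrix (an assumption, not from the text).
A = [[2.0, 0.0, 0.0],
     [0.0, 1.0, 0.0],
     [0.0, 0.0, 3.0]]
x_min = riemannian_gradient_descent(A, [1.0, 1.0, 1.0])
cost = dot(x_min, matvec(A, x_min))
```

The constraint that `x` has unit norm never appears as an explicit constraint. It is handled by the projection and the retraction, so each iterate stays on the sphere and the cost approaches the smallest eigenvalue of `A`.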

Markus Grasmair is working on inverse problems and image processing. In particular, he is interested in the analysis of sparsity-enhancing regularisation methods related to total variation regularisation and its non-convex variants. Such methods typically lead to non-smooth optimisation problems or variational inclusions on Hilbert or Banach spaces, which can be tackled with ideas from (generalised, abstract) convex analysis. In addition, he works on applications of shape spaces in inverse problems and imaging.

Elisabeth Köbis specializes in set optimization, a modern branch of mathematics that treats objective and constraint mappings as set-valued maps in various spaces. In finite dimensions, it includes multiobjective optimization, where conflicting criteria are minimized simultaneously, as is common in real-world problems such as financial portfolio selection. In infinite-dimensional spaces, it is known as vector optimization, focusing on function spaces. Elisabeth also applies set optimization to programming under uncertainty and studies its relations to vector variational inequalities.
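As a small illustration of minimizing conflicting criteria simultaneously, the following sketch filters a finite set of candidates down to its Pareto-optimal (non-dominated) points. The portfolio data and the function names are hypothetical and serve only as an example. Each portfolio is scored by risk and negative expected return, so that both objectives are minimized.

```python
def dominates(a, b):
    """True if a is no worse than b in every objective and
    strictly better in at least one (all objectives minimized)."""
    return (all(x <= y for x, y in zip(a, b))
            and any(x < y for x, y in zip(a, b)))

def pareto_front(points):
    """Keep the points that no other point dominates."""
    return [p for p in points
            if not any(dominates(q, p) for q in points)]

# Hypothetical portfolios as (risk, negative expected return).
portfolios = [
    (0.10, -0.04),
    (0.20, -0.07),
    (0.15, -0.03),
    (0.30, -0.08),
    (0.25, -0.07),
]
front = pareto_front(portfolios)
```

No single point on the resulting front is best in both objectives. Lowering risk further is only possible by accepting a lower expected return, which is the trade-off that multiobjective optimization makes precise.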